Many online assessments adapt paper exams into multiple-choice questions (MCQs), but this format frequently fails to meet educational standards of authenticity in assessment.1 Instead, advanced question types allow students to engage with online assessments more authentically.

Educational researcher Grant Wiggins, who coined the term ‘authentic assessment,’ writes: "Authentic assessments require students to be effective performers with acquired knowledge. Traditional tests tend to reveal only whether the student can recognize, recall or ‘plug in’ what was learned out of context."2 Within this dichotomy, we identify six key reasons not to rely on multiple-choice alone for authentic assessment.

The Six Reasons Are:

- Guessing answers: Students may get an answer right by chance rather than by working through the solution.

- Teaching irrelevant methods: Instead of solving the problem from scratch, students may look at the options and select the most plausible one.

- Untested notation: Students' notation is not tested, because significant figures and units are pre-selected.

- Hidden working: Steps of working are neither tested nor seen, which makes it harder to evaluate where a student went wrong.

- Inability to randomize questions: Students miss the benefit of learning from multiple variants.

- Longer exam creation time: Writing plausible alternative options for each question adds time and work for exam writers.
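The first reason can be put in numbers. The sketch below is illustrative only: it assumes four options per question, a 10-question test, and a pass mark of 5, none of which come from a real exam, and it treats every answer as an independent random guess.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """Probability of at least k successes in n independent trials with success chance p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Illustrative assumptions: 4 options per question, 10 questions, pass mark of 5.
options_per_question = 4
p_guess = 1 / options_per_question

print(f"Chance of guessing one question correctly: {p_guess:.0%}")
print(f"Chance of passing by guessing alone: {prob_at_least(5, 10, p_guess):.1%}")
```

With a free-response question there is no short list of options to pick from, so the per-question chance of a correct blind guess is far lower.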

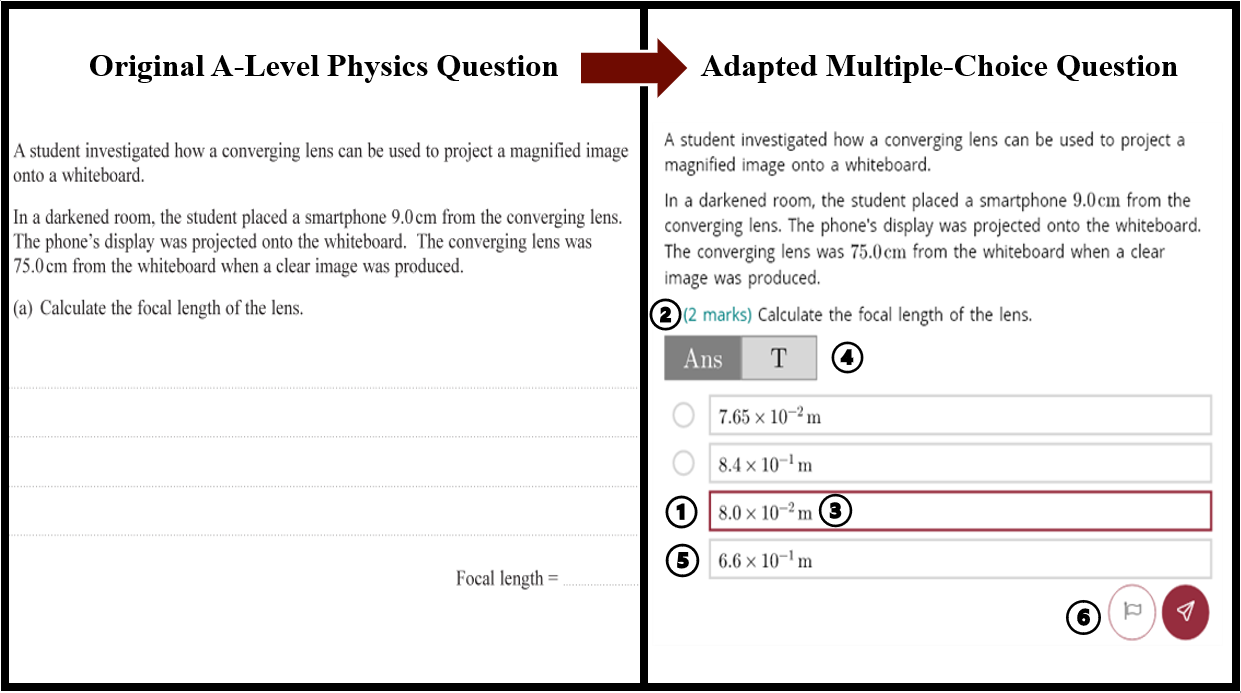

Six Reasons in Practice

Compared with the original exam question, the MCQ adaptation:

- Allows students to guess the correct answer.

- Eliminates the ‘scratch’ area, so students can apply irrelevant methods to select an answer.

- Does not test notation, because the units (m) are pre-selected.

- Documents no steps of working, unlike the original exam.

- Restricts randomization beyond the initial MCQ adaptation, because the answer options are pre-selected.

- Takes longer to create, because three additional, believable options must be written alongside the correct answer.
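To illustrate the randomization point: when the answer is computed rather than pre-selected, one template can yield many variants. The sketch below is a hypothetical example, not a description of any platform's implementation. The `Variant` class, the `make_variant` function, and the average-speed question are all invented for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Variant:
    prompt: str
    answer: float  # expected value, in metres per second
    unit: str


def make_variant(seed: int) -> Variant:
    """Build one variant of a hypothetical average-speed question from a seed."""
    rng = random.Random(seed)  # the same seed always reproduces the same variant
    distance_m = rng.randrange(100, 1000, 50)
    time_s = rng.choice([10, 20, 25, 40, 50])
    prompt = (
        f"A cyclist travels {distance_m} m in {time_s} s. "
        "Calculate the average speed, including units."
    )
    # The expected answer is derived from the parameters, so no options need writing.
    return Variant(prompt, distance_m / time_s, "m/s")


for seed in range(3):
    variant = make_variant(seed)
    print(variant.prompt, "->", variant.answer, variant.unit)
```

Seeding per student (an assumption of this sketch) keeps each student's variant stable across page reloads while still giving different students different numbers.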

Advanced Question Types for Authenticity

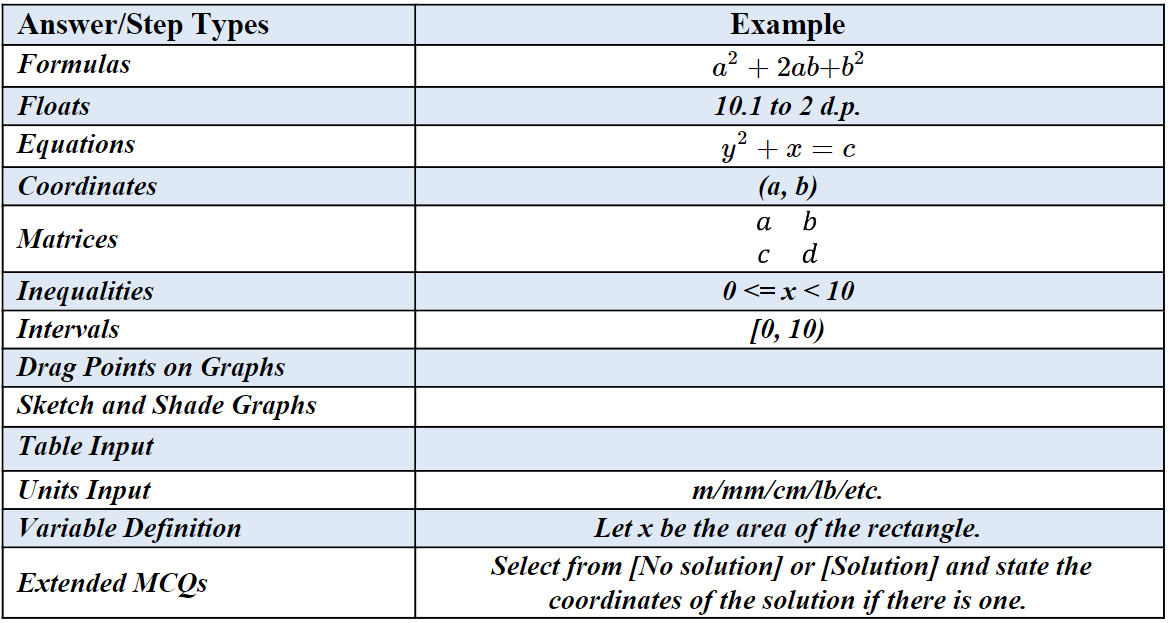

To provide authentic quantitative assessments online for the range of paper assessments we see today, it is imperative to provide a range of answering methods.

We focus on question types with answers that can be reliably assessed by a computer. A symbolic algebra engine processes student answers so that correct answers are marked as correct even when expressed in different forms. Our question types require students to provide answers with precise notation and units, so our platform can mark each component of an answer as a teacher would.
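As a rough illustration of these two ideas (accepting equivalent forms, and marking each component separately), consider the simplified sketch below. It is a stand-in, not the platform's engine: it uses Python's standard `fractions` module, so it only recognizes equivalent rational numbers such as `0.5`, `1/2`, and `2/4`, whereas a real symbolic algebra engine would also compare algebraic expressions. The `mark` function and the "value, space, unit" response format are assumptions made for this example.

```python
from fractions import Fraction


def mark(response: str, expected_value: Fraction, expected_unit: str) -> dict:
    """Mark the numeric value and the unit of a response such as '0.5 m' separately."""
    value_text, _, unit_text = response.strip().partition(" ")
    try:
        # Fraction normalizes '0.5', '1/2' and '2/4' to the same exact value.
        value_ok = Fraction(value_text) == expected_value
    except (ValueError, ZeroDivisionError):
        value_ok = False  # not a number this simple parser understands
    unit_ok = unit_text.strip() == expected_unit
    return {"value": value_ok, "unit": unit_ok}


for response in ["0.5 m", "1/2 m", "2/4 m", "0.5", "0.5 cm", "half m"]:
    print(f"{response!r:10} {mark(response, Fraction(1, 2), 'm')}")
```

Returning a result per component, rather than a single right-or-wrong flag, is what allows partial credit and targeted feedback, for example a correct value with a missing unit.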

Our mission is to preserve authenticity by continually expanding our platform's capabilities to include more question types, so educators can ask the questions they want to ask. The current range includes:

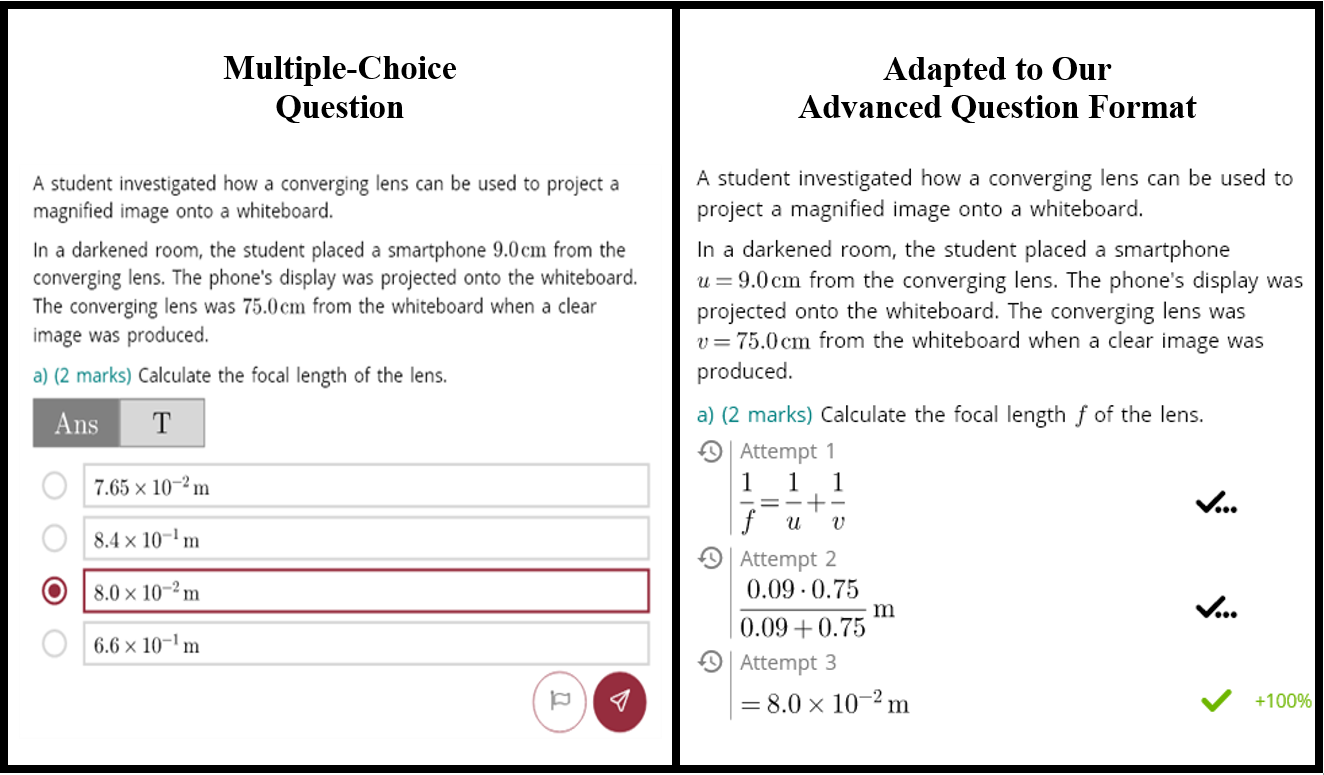

The comparison below demonstrates how this range of advanced question types overcomes the limitations of multiple-choice and enhances the authenticity of an assessment. Using our ‘float’ feature, students can calculate and input a precise answer:

Advanced Question Types for Enhanced Assessment

When adapting paper exams for online use, we ensure that the quality of the questions is enhanced, not reduced, through our platform's advanced capabilities for authentic assessment and automated marking. By design, these capabilities address the limitations of online multiple-choice assessment by preserving as much as possible of the original intelligence of paper exams. They prompt students to engage authentically and apply acquired knowledge online rather than simply ‘plug in’ answers out of context.

1. Loepp, E. (2021). The Benefits of Higher-Order Multiple-Choice Tests. Inside Higher Ed: Times Higher Education. https://www.insidehighered.com/advice/2021/06/23/rethinking-multiple-choice-tests-better-learning-assessment-opinion

2. Wiggins, G. (2019). The Case for Authentic Assessment. Practical Assessment, Research and Evaluation, Vol. 2, Article 2. https://doi.org/10.7275/ffb1-mm19